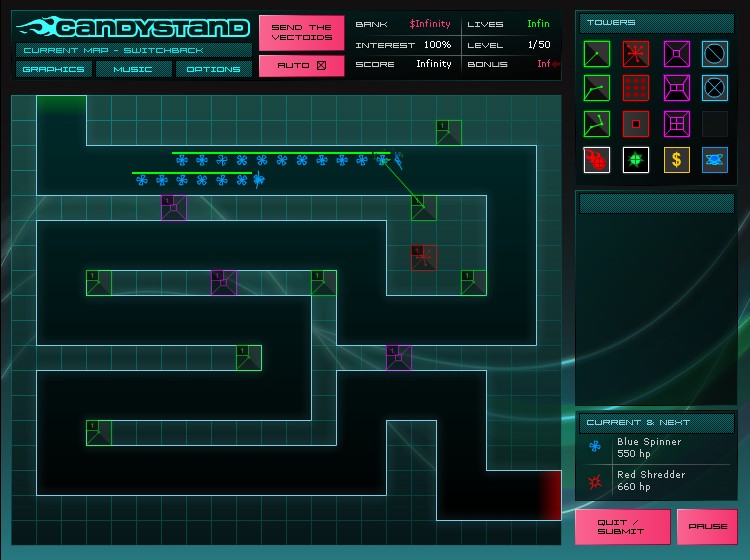

In this entry in the popular tower defense genre, players aim to eliminate the Vectoid threat before the Vectoids reach the end of the path. You do this by constructing towers along the path to attack oncoming Vectoids. At your disposal are eleven flavors of towers, each of which can be upgraded to level 10 (increasing the tower’s damage and range). Success depends not only on the types of tower you select, but also on where you place them and which upgrade and bonus options you take. The Green Lasers, for example, lock onto one Vectoid and deal ongoing damage. The Red Spammer fires heat-seeking rockets at random targets in range. Blue Ray towers drain power and cause Vectoids to slow down. The in-game guide lists each tower in detail. Every few waves you’ll also be rewarded with a Yellow Energy Cell, which you can use to purchase one of three Boosts. Damage and Range Booster towers can be placed on the grid to increase the stats of the towers around them.

It is interesting to see how strong a deep neural network in AlphaGo can become, i.e., how closely it can approximate the optimal value function and policy, and how soon a very strong computer Go program will be available on a mobile phone.

The computation cost is probably too formidable for researchers with average computational resources to replicate AlphaGo Zero. ELF OpenGo is a reimplementation of AlphaGo Zero/AlphaZero using ELF, at. AlphaGo Zero requires a huge amount of training data, so it is still a big-data problem. However, that data can be generated by self-play, given a perfect model or precise game rules.

Because they rely on the perfect model or precise game rules available for computer Go, AlphaGo algorithms have their limitations. For example, in healthcare, robotics, and self-driving problems, it is usually hard to collect a large amount of data, and it is hard or impossible to obtain a close enough, let alone perfect, model. As such, it is nontrivial to apply AlphaGo Zero algorithms directly to such applications. On the other hand, the AlphaGo algorithms, and especially the underlying techniques (deep learning, RL, MCTS, and self-play), have many applications. … recommend the following applications: general game-playing (in particular, video games), classical planning, partially observed planning, scheduling, constraint satisfaction, robotics, industrial control, and online recommendation systems. The AlphaGo Zero blog at blog/alphago-zero-learning-scratch/ mentions the following structured problems: protein folding, reducing energy consumption, and searching for revolutionary new materials. Several of these, like planning, scheduling, and constraint satisfaction, are constraint programming problems.

AlphaGo has made tremendous progress and set a landmark in AI. However, we are still far from attaining artificial general intelligence (AGI).
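
Self-play can manufacture training data whenever the rules are exact. A toy game makes this concrete. The sketch below is only an illustration under stated assumptions. It swaps Go for single-pile Nim (players alternately remove 1 to 3 stones, and whoever takes the last stone wins). It also uses a uniformly random policy where AlphaGo Zero would use MCTS guided by its network. All function names are hypothetical. Each game yields (position, player to move, outcome z) records, the kind of label a value function is trained on.

```python
import random
from typing import List, Tuple

# Hypothetical toy stand-in for Go: single-pile Nim.
# Each turn a player removes 1, 2, or 3 stones; taking the last stone wins.
# Because these rules are exact, self-play can generate as much data as we like.

Record = Tuple[int, int, int]  # (pile before the move, player to move, outcome z)


def legal_moves(pile: int) -> List[int]:
    """Moves allowed by the (perfectly known) rules."""
    return [k for k in (1, 2, 3) if k <= pile]


def self_play_game(start_pile: int, rng: random.Random) -> List[Record]:
    """Play one game against itself with a uniformly random policy.

    Assumption: random moves replace AlphaGo Zero's MCTS-guided moves.
    """
    history: List[Tuple[int, int]] = []
    pile, player = start_pile, 0
    while pile > 0:
        history.append((pile, player))
        pile -= rng.choice(legal_moves(pile))
        player = 1 - player
    winner = 1 - player  # the player who made the last move took the last stone
    # Label every position with the final result from the mover's perspective.
    return [(p, who, 1 if who == winner else -1) for p, who in history]


def generate_dataset(num_games: int, start_pile: int = 10, seed: int = 0) -> List[Record]:
    """Concatenate the records of many independent self-play games."""
    rng = random.Random(seed)
    data: List[Record] = []
    for _ in range(num_games):
        data.extend(self_play_game(start_pile, rng))
    return data


if __name__ == "__main__":
    data = generate_dataset(num_games=1000)
    print(len(data), "training positions from 1000 games")
```

In AlphaGo Zero each record would also store the MCTS search probabilities for that position, so the same games supply policy targets as well as value targets.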

MCTS can be viewed as a policy improvement operator. AlphaGo Zero has attained superhuman performance. This may confirm that human professionals have developed effective strategies. However, AlphaGo Zero does not need to mimic human professional play, and thus it does not need to predict professionals' moves correctly.

The inputs to AlphaGo Zero include: the raw board representation of the position, its history, and the color to play, as 19 × 19 images; the game rules; a game scoring function; and the invariance of the game rules under rotation and reflection, and to color transposition except for komi. AlphaGo Zero utilizes 64 GPU workers and 19 CPU parameter servers for training, around 2,000 TPUs for data generation, and 4 TPUs for game playing.
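
The Go rules are invariant under rotation and reflection, so each board maps to eight equivalent positions (the symmetry group of the square). AlphaGo Zero exploited this to augment its training data and to present positions to the network under a randomly chosen symmetry during search. Below is a minimal pure-Python sketch of generating the eight variants. It assumes a board stored as a list of rows. The helper names and the 0/1/-1 stone encoding are illustrative and do not come from any library.

```python
from typing import List

Board = List[List[int]]  # square board, e.g. 19 x 19; assumed encoding: 0 empty, 1 black, -1 white


def rotate90(board: Board) -> Board:
    """Rotate a square board 90 degrees clockwise."""
    return [list(row) for row in zip(*board[::-1])]


def reflect(board: Board) -> Board:
    """Mirror a board left to right."""
    return [row[::-1] for row in board]


def dihedral_transforms(board: Board) -> List[Board]:
    """Return all 8 rotations and reflections of a square board."""
    out: List[Board] = []
    current = board
    for _ in range(4):
        out.append(current)           # rotation by k * 90 degrees
        out.append(reflect(current))  # the same rotation followed by a mirror
        current = rotate90(current)
    return out


if __name__ == "__main__":
    tiny = [[1, 0, 0],
            [0, -1, 0],
            [0, 0, 0]]
    for b in dihedral_transforms(tiny):
        print(b)
```

When augmenting training data this way, the policy target over board points must be transformed with the same rotation or reflection as the board. Otherwise the move labels no longer line up with the stones.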

AlphaGo Zero is not only a heuristic search algorithm. It performs policy evaluation and policy improvement as one iteration of generalized policy iteration. In this procedure heuristic search, in particular MCTS, plays a critical role, but within the scheme of RL generalized policy iteration, as illustrated in the pseudo code in Algorithm 12.
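
Two pieces of AlphaGo Zero's search make the "MCTS as policy improvement" reading concrete. During each simulation, MCTS picks the action maximizing $Q(s,a) + U(s,a)$, where $U(s,a) = c_{\text{puct}} P(s,a) \sqrt{\sum_b N(s,b)} / (1 + N(s,a))$. This bonus favors moves that the network prior $P$ likes but the search has rarely tried. After the search, the visit counts are turned into an improved policy $\pi(a) \propto N(s,a)^{1/\tau}$. The sketch below shows only these two steps for a single node, not a full tree search. The value $c_{\text{puct}} = 1.5$ and the function names are assumptions for illustration.

```python
import math
from typing import List


def puct_select(priors: List[float], visits: List[int], q_values: List[float],
                c_puct: float = 1.5) -> int:
    """Index of the action maximizing Q(s,a) + U(s,a) at one search node.

    U(s,a) = c_puct * P(s,a) * sqrt(sum_b N(s,b)) / (1 + N(s,a)).
    c_puct = 1.5 is an illustrative assumption, not a published setting.
    """
    sqrt_total = math.sqrt(sum(visits))
    scores = [q + c_puct * p * sqrt_total / (1 + n)
              for p, n, q in zip(priors, visits, q_values)]
    return max(range(len(scores)), key=scores.__getitem__)


def visits_to_policy(visits: List[int], temperature: float = 1.0) -> List[float]:
    """Improved policy pi(a) proportional to N(a)^(1/temperature).

    temperature == 0 is treated as the limit: pick the most-visited move.
    """
    if temperature == 0:
        best = max(range(len(visits)), key=visits.__getitem__)
        return [1.0 if a == best else 0.0 for a in range(len(visits))]
    weights = [n ** (1.0 / temperature) for n in visits]
    total = sum(weights)
    return [w / total for w in weights]


if __name__ == "__main__":
    visits = [20, 70, 10]  # hypothetical visit counts after a search
    print(visits_to_policy(visits))                 # [0.2, 0.7, 0.1]
    print(visits_to_policy(visits, temperature=0))  # [0.0, 1.0, 0.0]
```

The returned $\pi$ is generally sharper than the prior, and it becomes the policy target for the next round of network training. That is the sense in which search improves the policy inside generalized policy iteration.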

David closes the lecture with a brief discussion of deep RL beyond games.

Discussions about AlphaGo Zero in Deep reinforcement learning: AlphaGo Zero is an RL algorithm. It is neither supervised learning nor unsupervised learning, because the game score is a reward signal, not a supervision label. Optimizing the loss function l, however, is supervised learning.
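
For reference, the loss l defined in the AlphaGo Zero paper is $l = (z - v)^2 - \pi^\top \log \mathbf{p} + c\lVert\theta\rVert^2$. It has three terms:

* a squared error between the predicted value $v$ and the self-play game outcome $z$;
* a cross-entropy between the network's move probabilities $\mathbf{p}$ and the MCTS search probabilities $\pi$;
* L2 regularization of the weights $\theta$.

Because $z$ and $\pi$ act as fixed targets for each training example, this step is ordinary supervised regression and classification, even though the targets are produced by RL self-play. The sketch below evaluates the loss for one example in plain Python. The function name and the default $c = 10^{-4}$ are illustrative assumptions.

```python
import math
from typing import Sequence


def alphago_zero_loss(z: float, v: float, pi: Sequence[float], p: Sequence[float],
                      theta: Sequence[float], c: float = 1e-4) -> float:
    """l = (z - v)^2 - pi^T log p + c * ||theta||^2 for a single training example.

    z:     game outcome from the current player's view (+1 win, -1 loss)
    v:     the network's value prediction
    pi:    MCTS search probabilities (the policy target)
    p:     the network's move probabilities (must be > 0 wherever pi > 0)
    theta: flattened network weights (toy-sized here)
    c:     L2 coefficient; 1e-4 is an assumed default
    """
    value_loss = (z - v) ** 2
    # Terms with pi(a) == 0 contribute nothing, so skip them to avoid log(0).
    policy_loss = -sum(t * math.log(q) for t, q in zip(pi, p) if t > 0)
    l2_penalty = c * sum(w * w for w in theta)
    return value_loss + policy_loss + l2_penalty


if __name__ == "__main__":
    loss = alphago_zero_loss(z=1.0, v=0.5, pi=[1.0, 0.0], p=[0.5, 0.5], theta=[], c=0.0)
    print(round(loss, 4))  # 0.25 + ln 2 = 0.9431
```

In practice the loss is averaged over a minibatch of self-play positions and minimized by gradient descent, with a deep-learning framework computing the gradients with respect to $\theta$.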
